Thursday, October 19, 2006

SPA Timesheet idea.

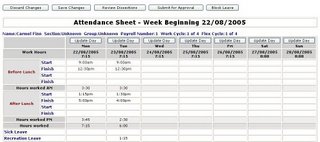

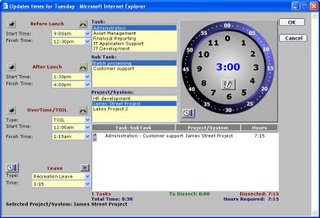

One of the products I have commercialised is a timesheeting and task dissection application. It has two production versions: a Lotus Notes/Domino version and an MS ASP/SQL version. I am not going to talk about it here, as many of my customers are very security conscious and have non-disclosure clauses in their contracts. But lately I have been thinking about creating a very cut-down SPA (Single Page Application) using much of the client-side code from the MS ASP/SQL version. To keep it simple at first, I will only implement some of the business rules from the commercial version and not worry about the task dissections.

I have two approaches I am currently looking into.

1 ) Implement on top of the TiddlyWiki engine.

2 ) Go my own way and write the plumbing myself.

I am going to do some research over the coming weeks to decide which approach to take. The basic idea is:

1 ) Visit my new "SPATimesheet" website and create an account

2 ) Enter your preferences into the website, such as your working hours, whether you get overtime, etc.

3 ) The system then generates a single HTML page that you can save to your PC. This page contains all of the code and data needed to run the web-based timesheet in a browser, even off-line.

This is the same concept my friend used when he created http://www.tiddlyspot.com, except that site is for wikis, not timesheets.

I will post any updates about this idea in future postings.

Labels: dhtml, javascript, SPA

First Oz-Korean T-shirt Arrives

Well, here it is, built at www.zazzle.com and delivered yesterday. I purchased the most basic shirt available and it cost about A$22. I was a little concerned about the quality so I only ordered one, but it is really well done. I am not sure exactly how they do the screen printing, but it is far superior to anything you could attempt at home.

Next time I will create a far more complicated design.

Oh and in case you didn't read my past posts on this, it is a shirt that says in Korean "I like to ride kangaroos" and it is for easily identifying yourself as an Aussie (호주 사람) when in Korea.

Tuesday, October 17, 2006

Happy birthday to my wife.

Triffid ??

One of the very, very spiky plants in my yard is putting on a fascinating display at the moment. I have no idea what this plant is called, but it seems to be growing a triffid head.

I will get worried if it also grows legs.

.Net Zipper Class

The class exposes 4 public static methods.

byte[] GZipBytetoByte(Byte[] GzippedBuffer) – Takes a gzipped byte array and returns the unzipped byte array.

byte[] BytetoGZipByte(byte[] buffer) – Takes a byte array and returns the zipped version of that byte array.

byte[] GzipfiletoByte(string filepath) – Unzips a file previously zipped by the zipper class and returns a byte array of the unzipped data.

void BytetoGZipFile(string filepath, byte[] buffer) – Takes an unzipped byte array, zips it and writes it to a file.

The GZipBytetoByte and BytetoGZipByte methods are useful to me because they allow byte array data to be zipped and unzipped in memory, which means that I can compress blobs before I write them to SQL, and compress large chunks of binary data before they are sent across the network.

System.IO.Compression.GZipStream seems to be giving me about 85% compression with very little overhead.
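The zipper class itself is not listed in this post, so the following is only a minimal sketch of how the two in-memory methods could be written on top of System.IO.Compression.GZipStream. The class name ZipperSketch and the 4096-byte read buffer are assumptions made for illustration; only the two method names come from the list above.

```csharp
using System.IO;
using System.IO.Compression;

// Hypothetical stand-in for the zipper class described above.
public static class ZipperSketch
{
    // Takes a byte array and returns the gzipped version of it.
    public static byte[] BytetoGZipByte(byte[] buffer)
    {
        using (MemoryStream output = new MemoryStream())
        {
            // The GZipStream has to be closed before the result is read,
            // otherwise the gzip footer has not been written yet.
            using (GZipStream gzip = new GZipStream(output, CompressionMode.Compress))
            {
                gzip.Write(buffer, 0, buffer.Length);
            }
            return output.ToArray();
        }
    }

    // Takes a gzipped byte array and returns the unzipped bytes.
    public static byte[] GZipBytetoByte(byte[] gzippedBuffer)
    {
        using (MemoryStream input = new MemoryStream(gzippedBuffer))
        using (GZipStream gzip = new GZipStream(input, CompressionMode.Decompress))
        using (MemoryStream output = new MemoryStream())
        {
            byte[] chunk = new byte[4096];
            int read;
            // Read until the decompressed stream is exhausted.
            while ((read = gzip.Read(chunk, 0, chunk.Length)) > 0)
            {
                output.Write(chunk, 0, read);
            }
            return output.ToArray();
        }
    }
}
```

Compressing a blob before it goes into SQL is then a single call to BytetoGZipByte, and GZipBytetoByte reverses it when the blob is read back.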

Could be in Korea early next year

Monday, October 16, 2006

Building a webstore with programmable security in .Net

This is part of a series of articles I am writing on what is required to attempt the migration of custom IBM “Domino” applications to a Microsoft environment (.Net / SQL Server / Exchange).

Some other posts for background work on this are :

Integrating Domino data with .Net

Building a "Domino" like data router in .Net

Another great feature of IBM Domino is that it has a web-store with programmable security built-in. This means that if I attach an Excel spreadsheet to a document in a notes database; then that spreadsheet automatically gets the security model wrapped around it that applies to the document it is attached to. As a “Domino” database is viewable from the web, it means that by simply attaching files to documents in a notes database you have created a secure web-store for those documents.

IBM Domino provides a security model that is extremely easy to programmatically manipulate. There are a couple of levels of over-arching security which aren’t really programmable; below those there are:

Database access

View access

Form Access

Document Access

Section Access

Most Domino developers implement data security at the database level and then refine that security programmatically at the document level. At the database level you use an Access Control List (ACL) to specify who can access the database, what their maximum access level is (Manager, Designer, Editor, Author, Reader, Depositor) and which ACL Roles they belong to. This can be done per user, per group, or both.

At the document level the Domino developer can add “Reader” and “Author” fields to documents, containing lists of users, groups and/or ACL Roles. If a user is listed in a “Reader” field (perhaps through group or ACL Role membership) then they can at least read the document; if they are in an “Author” field then they can edit it. (Access control is hierarchical, so if you are only a Reader at the database level, then being in an “Author” field of a document still only makes you a Reader.)
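The hierarchy rule in the last sentence can be pictured as taking the lower of the database-level and document-level access. The sketch below is a deliberately simplified model and not Domino's actual algorithm: it only knows three levels, and the names DocumentAccessSketch, Access and Resolve are invented for illustration.

```csharp
using System;
using System.Collections.Generic;

// Simplified, hypothetical model of database-level plus document-level access.
public static class DocumentAccessSketch
{
    public enum Access { None = 0, Reader = 1, Author = 2 }

    // userNames holds the user's own name plus every group and ACL Role they belong to.
    public static Access Resolve(
        Access databaseLevel,
        ICollection<string> userNames,
        ICollection<string> readerField,
        ICollection<string> authorField)
    {
        Access documentLevel = Access.None;
        if (Overlaps(userNames, authorField))
        {
            documentLevel = Access.Author;
        }
        else if (Overlaps(userNames, readerField))
        {
            documentLevel = Access.Reader;
        }

        // The database level is a ceiling: a document field can never raise it.
        return (Access)Math.Min((int)databaseLevel, (int)documentLevel);
    }

    // True when at least one of the user's names appears in the field.
    private static bool Overlaps(ICollection<string> names, ICollection<string> field)
    {
        foreach (string name in names)
        {
            if (field.Contains(name)) return true;
        }
        return false;
    }
}
```

In this model a user whose database access is Reader and who appears in an Author field still resolves to Reader, which matches the parenthetical above.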

Changing access to a document and its attached files is therefore very easy. All you need to do is adjust a Reader field and it affects who can view that document, including any file attachment(s) on it.

For instance, here is a computed formula that adjusts who can read the document based on a field, “DocStatus”, which might hold the current status of the document in the workflow.

@If(DocStatus="Complete"; "Joe Bloggs/Unit1/Company"; "[AdminSupport]":"Sales":"Joe Bloggs/Unit1/Company");

This formula states that if the DocStatus field is “Complete” ( this may be a dropdown list for the user or a field set by some system process ) then only “Joe Bloggs” can read this document, otherwise anyone in the AdminSupport ACL Role, the Sales Group or Joe Bloggs can read the document.

This formula is obviously overly simple, but as the security model and data are exposed to the web via the Domino HTTP stack, this one line of formula gives you a secure web-based workflow system. Any files attached to documents in this database are also contained inside the secure web-store, so they too are part of the workflow. This simple example shows how quickly you can implement a secure web-based workflow system in IBM Domino.
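For comparison, the same reader rule can be written as a .Net method. This is only an illustrative sketch: the class and method names are made up, and in .Net the returned list does nothing by itself, because something else still has to enforce it, which is the point of the rest of this post.

```csharp
// Hypothetical .Net equivalent of the computed Reader formula above.
public static class ReaderFieldSketch
{
    // Returns the names allowed to read a document for a given DocStatus value.
    public static string[] ComputeReaders(string docStatus)
    {
        if (docStatus == "Complete")
        {
            // Once complete, only Joe Bloggs may read the document.
            return new string[] { "Joe Bloggs/Unit1/Company" };
        }

        // Otherwise the AdminSupport role, the Sales group and Joe Bloggs may read it.
        return new string[] { "[AdminSupport]", "Sales", "Joe Bloggs/Unit1/Company" };
    }
}
```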

Now, how to replicate this functionality with .Net? There have been rumours for years that Microsoft was going to release a product that had this sort of functionality “out-of-the-box”, but it has never materialised. So once again we need to code this.

If you want to allow someone access to an Excel spreadsheet via the web using Microsoft products, you place it somewhere on an IIS server. If you want to restrict access, you place some file-level access control around it. But what do you do if you want to restrict access to that spreadsheet based on where it is in a workflow? You could programmatically adjust the file system access, I guess, but what if you have 100000+ records in a 20-stage workflow and 5000 users?

You could possibly use a SharePoint Server to store the files, but the access control seems to be nowhere near granular enough to do this, so I don’t think this is an option. Also I already need Exchange , IIS and SQL Server for other parts of the migration. Do I really want to add another server technology to this solution ??? Not if I can help it.

There are two components to this:

1) Determining the access a user has to a file based on some type of access control and business logic.

2) Storing the files so that they are within the programmable secure store.

Determining the Access a user has to a file based on access control and business logic.

IBM Domino provides a fully integrated security engine in the product. So there is no actual programming required to implement the security model. In .Net there are a number of ways to implement security. I use a SQL based .Net Role Provider. I am not going to go into detail about writing Role providers here as there are a number of good resources on the net, and how you implement them is determined by your application environment.

http://msdn2.microsoft.com/en-us/library/aa479032.aspx

http://weblogs.asp.net/scottgu/pages/Recipe_3A00_-Implementing-Role_2D00_Based-Security-with-ASP.NET-2.0-using-Windows-Authentication-and-SQL-Server.aspx

But to implement a web-store in .Net you will need to provide some form of usable security provider so you can determine what the current user’s access level is to the data they are viewing.

Once you have implemented this then you should be able to write methods on your pages that follow similar logic to the code below.

protected bool CanUserPerformAction(string Action)

{

bool retval=false;

switch (Action)

{

case "Read":

//if user can access site then they can access this form

retval = true;

break;

case "Edit":

if (Roles.IsUserInRole("Administrator") || Roles.IsUserInRole("Editor")) {

retval=true;

}

break;

case "Delete":

if (Roles.IsUserInRole("Administrator")) {

retval=true;

}

break;

case "Create":

if (Roles.IsUserInRole("Administrator") || Roles.IsUserInRole("Editor")) {

retval=true;

}

break;

}

return retval;

}

This method combines simple business logic (the action being attempted) with user access to determine whether the user can perform a certain action on the data. For complicated workflows this method would contain all of the business logic needed to determine whether the current user can perform the requested action.

Storing the files so that they are within the programmable secure store.

If a file is to be used inside an application then access to that file must be controllable by that application’s business logic. If you place a file on an IIS server in an area that is directly visible via http, then the control you have over access to that file is limited. To create a fully-programmable webstore you really need to move the files outside the standard IIS space so they are not directly loadable via http from the file store. That way it is impossible for a user to load a file via http without first going through some security logic. You can either store the file on the file system (outside of IIS reach) or inside a database. For my own purposes I store the files inside SQL, because this approach allows me to integrate with the .Net data router I have already built.

The following code is used to store files uploaded via an ASP.NET web form in a SQL Server database.

protected void SaveFiles(int Entryid)

{

System.Web.HttpFileCollection myfiles = System.Web.HttpContext.Current.Request.Files;

if (myfiles.Count != 0)

{

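for (int iFile = 0; iFile < myfiles.Count; iFile++)

{

System.Web.HttpPostedFile postedFile = myfiles[iFile];

//NOTE: parts of this loop were garbled when the listing was published. A check on the file name is missing here, and lines marked (reconstructed) are assumptions.

int nFileLen = postedFile.ContentLength;

byte[] myFileData = new byte[nFileLen];

//(reconstructed) read the uploaded bytes into the buffer

postedFile.InputStream.Read(myFileData, 0, nFileLen);

AttachmentsDS AttDS = new AttachmentsDS();

//(reconstructed) create a new attachment row in the typed dataset

AttachmentsDS.AttachmentsRow theAtt = (AttachmentsDS.AttachmentsRow)AttDS.Tables[0].NewRow();

//Keep only the file name, not the client-side path

string[] FileNameArr = postedFile.FileName.Split(@"\".ToCharArray());

theAtt.FileName = FileNameArr[FileNameArr.GetUpperBound(0)];

theAtt.InboxID = Entryid;

theAtt.ContentType = postedFile.ContentType;

theAtt.FileData = myFileData;

theAtt.AttachNum = iFile;

//The call that writes the row to SQL via the business object is missing from the original listing.

}

}

}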

Once the files are in the SQL database they are within the bounds of the application security model. The only way a user can download or view a file is via a custom download page within the application. This page contains the security business logic (from above) to determine whether the user may download or view the file. If they may, the following code is called to retrieve the file from SQL and send it to the user:

protected void DisplayFile(int Entryid){

AttachmentsDS AttDS = new AttachmentsDS();

//Attempt to retrieve file from SQL via business object

AttDS = MyApp.DLC.AttachmentsDLC.GetAttachment(Entryid);

if (AttDS.Tables[0].Rows.Count == 0)

{

//there was no data returned so redirect.

Response.Redirect("~/ErrorPage?ErrNo=2392");

Response.End();

}

AttachmentsDS.AttachmentsRow dr = (AttachmentsDS.AttachmentsRow)AttDS.Tables[0].Rows[0];

Response.ContentType = dr.ContentType;

Response.AddHeader("Content-Disposition", "attachment; filename=\"" + dr.FileName + "\"");

Response.AddHeader("Content-length", dr.FileData.Length.ToString());

Response.OutputStream.Write(dr.FileData, 0, dr.FileData.Length);

}

Conclusion

Using a .Net Role Provider, some custom code and SQL server it is possible to create a secure programmable web-store for files. It is therefore possible to implement a web-based workflow system that contains attached files using this system. If this functionality is used along with the .Net data router then e-mails with attached files can automatically become part of a secure web-based workflow.

Again however this shows the amount of work required to implement something that is already built into the IBM Domino server. The creation of a SQL based role provider that suited my requirements actually took me about 3 weeks of research and coding. (This was somewhat due to the fact that I wanted the .Net SiteMap provider and the Role Provider to provide integrated security.)

In my next post (when I get time to write it) I will talk about implementing one of the last pieces of the system: a time-scheduled and mail-delivery notifier for .Net, to replace the event-driven and scheduled agents in IBM Domino.

Friday, October 13, 2006

Gifts from Korea

By some amazing fate of the Korean postal system we received two separate boxes from Korea yesterday. One was from 원일 (Frodo), and the other from 소현 (Angela). Lots and lots of Korean treats from Angela, and lots of movies from Frodo, so I will have to try to find some time to watch them all and post my reviews.

Thanks very much, and hope you are both studying hard.

한비 , 생일 축하해요 !

Very Interesting Read

I have started reading “Under the Loving Care of the Fatherly Leader” and so far I am finding it very interesting. Being a product of the Australian education system, with its constant fascination with the history of continents on the other side of the planet, I know very little about recent northern Asian history. So far (well, at least the first 100 pages) it has given me a very interesting insight into Kim Il-Sung’s history and the events that led up to him becoming leader of the DPRK. With 700+ pages to go, I am sure I will get some insight into the current developments on the Korean Peninsula. If you are interested in learning about modern Korean history and/or the rise of the Kim dynasty, I recommend this book.

Thursday, October 12, 2006

Deck is progressing.

Well, I have now finished the joists. I just have to put all of the decking down now. I cannot do this part early in the morning because I have to use a drop saw to cut the decking boards, and it is too loud for that time of the morning. So this will have to be a weekend job. Looking forward to firing up my new BBQ for the first time on the deck. (Just have to build that first too!!)

무궁화꽃 만발해 !

무궁화 {R:} Moo gung hwa {E:} The rose of Sharon is Korea’s national flower. When I knew that I was going to be the father of a child born in South Korea, I tracked down and planted one of these plants in my yard. It is now about 4m tall. Today is the first day it started to bloom this season. It has an amazing flower that starts out pure white but by the end of the day (6 hours or so) turns a bright pink. This photo I took this morning shows a newly bloomed flower next to one that bloomed a couple of hours earlier. (Click on the picture to zoom. Warning: large pic.)

Wednesday, October 11, 2006

Trying to create a “Domino” like data router in .Net

One of the greatest features of IBM Domino is that any document (record) is routable to anywhere, because the mail router and the data-engine are integrated. This means that any database can receive mail “out-of-the-box”. It is literally 1 line of code to e-mail a document from one database to another (this includes all rich-text, attachments, OLE Objects). Also Domino does not store data using a defined schema, so you can send a document from one database to another (or from an e-mail client to a database) even if the data fields that are in the document are not defined in the receiving database.

This means, for example, that I can send an e-mail from gmail/hotmail to a Domino database and it will happily store all of the e-mail’s contents (SMTP data fields, MIME, attachments), even though it may not have any field definitions or forms that relate to e-mail defined in the database.

As Domino has all of these features, building an e-mail based document workflow system with it is a breeze. (These features also mean that many people with a relational database background are scared of Domino.)

Trying to re-create these features in Microsoft products is quite difficult, but to migrate e-mail based workflow applications from Domino to .Net (without significantly changing business practices) I need a system of this type in place. Let’s look at the basic workflow design at a data level so I can better define what I am trying to achieve. At the data level an e-mail based workflow system needs to be able to:

1 ) Receive an e-mail from an internal or external person/system.

2 ) Allow custom fields to be added to the e-mail so it can be workflowed (these might be fields like “Assigned To”, “Workflow Status”, “Approvers Comments”, “Editor_Role”, “Reader_Role” etc.).

3 ) Provide for this “Extended E-mail” to be routed to other databases for further workflow(s) if required.

Domino provides all of these features in a single server, as part of the core product, but with Microsoft products I will need at least:

1 ) An Exchange server – to host the mail box that receives the e-mail.

2 ) An SQL Server – to store all of the extended data.

3 ) IIS Server – to host the .Net Application that will allow the user to interact with the data.

Already you can see there is a large install and management overhead on this; but Domino also provides 7 levels of Integrated Security “out-of-the-box”. Exchange , SQL Server , IIS and the custom .net all have separate non-integrated security models so there is also quite a lot of work setting all of this up, especially in the area of the .net application where I may need to write a custom security and role provider.(That alone is days of work).

As I said earlier Domino stores data without a schema, so adding a new field to any document can be done by the designer or user at run time. This is not true for Exchange. I am no expert on Exchange programming, but whenever I have asked anyone about adjusting the schema of the Exchange Store to add additional fields, the conversation always begins with a big long groan. If I want to migrate a workflow application then this application must be able to perform the same tasks as its previous version. Therefore it must be able to search, add new records, update existing records and delete the data in its datastore. It must also provide multiple levels of programmatically adjustable access to that data. This is certainly not something Exchange can provide so the only solution to this is to push all of the Exchange data into SQL so it can be properly integrated into the .Net Applications Environment.

Again, all of these features are provided “out-of-the-box” with Domino. Domino provides a full-text search engine (including binary attachment searching), the ability to update/create data in the same data store where the data is indexed and routed to, and granular, programmable security.

Every document workflow system is different, so the custom data fields and business logic that are added to an e-mail once it gets into a workflow system depend on that system’s requirements. At this stage I am simply trying to allow for e-mail -> datastore routing. So I will focus on:

a) Determining how to get the data from Exchange.

b) Creating a generic data schema for SQL to allow for the storage of e-mails delivered to an Exchange mail-box.

c) Creating an Exchange => SQL Server routing service to deliver the e-mails into SQL so they can then be accessed and workflowed by various .Net workflow systems. This may include multiple e-mail addresses per system.

Getting the Data from Exchange.

Exchange has a number of ways that its data can be accessed. From a development point of view I believe that WebDav is the easiest approach. This is the way that Outlook Web Access gets data from Exchange, so this is the approach I have taken. WebDav also requires no additional API; I can simply use .Net to create WebDav calls (using System.Net.HttpWebRequest) and process the xml response from the Exchange server.

The functions I need to be able to use are:

a) Get a list of e-mails in an in-box.

b) Retrieve the contents of an e-mail, including file attachments.

c) Delete an e-mail from an in-box.

As all mail is going to be routed into SQL, there is no point keeping the e-mail in Exchange, so the process will be: look for new e-mail => retrieve and convert to SQL => delete from Exchange.
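One pass of that process can be sketched as below. This is not the routerworker class, just an outline under assumptions: the WebDav and SQL steps are passed in as delegates (fetch, save and delete are hypothetical names) so that the ordering is the only thing on show.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical outline of one routing pass over a single mailbox.
public static class RouterCycleSketch
{
    // Returns the number of messages routed in this pass.
    public static int RunOnce(
        IEnumerable<string> messageUrls,
        Func<string, string> fetch,
        Action<string, string> save,
        Action<string> delete)
    {
        int routed = 0;

        // Work on a copy so that deleting mail cannot disturb the enumeration.
        foreach (string url in new List<string>(messageUrls))
        {
            string rawMessage = fetch(url);

            // If save throws, the delete below is never reached,
            // so the message stays in Exchange for the next pass.
            save(url, rawMessage);
            delete(url);
            routed++;
        }
        return routed;
    }
}
```

Deleting only after a successful save is the design choice worth noting: a failure part way through can cause a message to be picked up again on the next pass, but it cannot lose one.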

SQL Schema

There are a large number of fields available from the Exchange datastore via WebDav. But for the e-mail based workflow applications I currently have in Domino I only need the following information.

a) who sent the e-mail

b) who the e-mail was to, including cc, bcc

c) when the e-mail was sent

d) the subject of the e-mail

e) the body of the e-mail

f) the file attachments of the e-mail.

WebDav also exposes a number of other fields that are useful for uniquely identifying the mail message, plus threading information in case the e-mail is part of an e-mail conversation. I decided to include these in the schema as well. (Useful for e-mail enabled discussion systems.)

With these data requirements I came up with two SQL tables that can be added to any SQL database to allow the router service to deliver e-mail messages to the database from an Exchange mailbox. The SQL for these tables (Inbox and Inbox_Attachments) is below.

CREATE TABLE [dbo].[Inbox](

[ID] [int] IDENTITY(1,1) NOT NULL,

[Folder] [varchar](10) COLLATE Latin1_General_CI_AS NULL,

[EntryID] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[MessageID] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[DAVhref] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[DateSent] [datetime] NULL,

[FromName] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[FromEmail] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[ToEmail] [varchar](800) COLLATE Latin1_General_CI_AS NULL,

[CCEmail] [varchar](800) COLLATE Latin1_General_CI_AS NULL,

[BCCEmail] [varchar](800) COLLATE Latin1_General_CI_AS NULL,

[Subject] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[TextBody] [text] COLLATE Latin1_General_CI_AS NULL,

[hasattach] [varchar](50) COLLATE Latin1_General_CI_AS NULL,

[ConversationTopic] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[Subjectprefix] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

CONSTRAINT [PK_Inbox] PRIMARY KEY CLUSTERED

(

[ID] ASC

)WITH (PAD_INDEX = OFF, IGNORE_DUP_KEY = OFF) ON [PRIMARY]

) ON [PRIMARY] TEXTIMAGE_ON [PRIMARY]

CREATE TABLE [dbo].[Inbox_Attachments](

[ID] [int] IDENTITY(1,1) NOT NULL,

[InboxID] [int],

[AttachNum] [int] NULL,

[FileName] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[ContentType] [varchar](255) COLLATE Latin1_General_CI_AS NULL,

[FileData] [image] NULL,

CONSTRAINT [PK_Inbox_Attachments] PRIMARY KEY CLUSTERED

(

[ID] ASC

)WITH (PAD_INDEX = OFF, IGNORE_DUP_KEY = OFF) ON [PRIMARY]

) ON [PRIMARY] TEXTIMAGE_ON [PRIMARY]

Building the Router Service.

The router service needs to poll each Exchange mailbox that it is made aware of and see if there is new mail. If there is, it needs to convert that mail into SQL, run that SQL against the corresponding SQL database and then delete the e-mail from the mailbox.

As the polling needs to be continuous and possibly across hundreds of mailboxes, I have decided to implement this as a Windows service application. The service uses a config file that contains the following information for each mailbox/SQL pair.

a) The Exchange server where the mailbox resides

b) The Exchange user name and password for connecting to the exchange mail-box via WebDav

c) The connection string to the SQL database
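As a rough picture of one entry in that config file, the three items could be held in a small class like the one below. The class name MailboxRoute and its member names are assumptions; the Inbox URL is built the same way as in the polling code further down.

```csharp
// Hypothetical in-memory form of one mailbox/SQL pair from the config file.
public sealed class MailboxRoute
{
    public readonly string ExchangeServer;
    public readonly string ExchangeUser;
    public readonly string ExchangePassword;
    public readonly string SqlConnectionString;

    public MailboxRoute(string exchangeServer, string exchangeUser,
                        string exchangePassword, string sqlConnectionString)
    {
        ExchangeServer = exchangeServer;
        ExchangeUser = exchangeUser;
        ExchangePassword = exchangePassword;
        SqlConnectionString = sqlConnectionString;
    }

    // WebDav address of this mailbox's Inbox folder.
    public string InboxUrl
    {
        get { return "http://" + ExchangeServer + "/exchange/" + ExchangeUser + "/Inbox/"; }
    }
}
```

The service would load a list of these at start-up and walk it on every polling interval.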

Using this information the service polls Exchange at a set interval to see if there is new mail in each of the mailboxes. This is achieved from .net with the following code:

//Create an XML Document to store the emails in

System.Xml.XmlDocument xmlDoc = new XmlDocument();

XmlNodeList xmlNodeList;

//Create a http request

HttpWebRequest request =(HttpWebRequest)WebRequest.Create("http://" + this.ex_server + "/exchange/" + this.ex_user + "/Inbox/");

//Set the WebDav method

request.Method = "PROPFIND";

//Declare the request content type as XML

request.ContentType = "text/xml";

//Put in the credentials to connect to the Exchange mailbox

request.Credentials = new NetworkCredential(this.ex_user,this.ex_password);

//Get the response in a response object

WebResponse response = request.GetResponse();

//Create a Streamreader to read the response object appropriately

System.IO.StreamReader reader =

new System.IO.StreamReader(response.GetResponseStream());

//Load the xml response data into an XML Document

xmlDoc.LoadXml(reader.ReadToEnd());

//Fill the NodeList to process the response

xmlNodeList = xmlDoc.GetElementsByTagName("a:response");

An example of the xml that is returned from the Exchange for this call is viewable here. Once the xmlNodeList is filled then the router can process the information and download each individual e-mail. I have made the source code for the routerworker class that I use to do all of the work available. Please note that I use strongly typed datasets through business objects and Microsoft Enterprise libraries for data access.
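To give a feel for the processing step, here is a small sketch that pulls the href of every response element out of a WebDav multistatus reply. It is not the routerworker code. It assumes the reply uses the standard "DAV:" namespace, and it binds its own prefix through an XmlNamespaceManager instead of relying on the server choosing the prefix "a".

```csharp
using System.Collections.Generic;
using System.Xml;

// Hypothetical helper for reading a WebDav multistatus reply.
public static class DavResponseSketch
{
    // Returns the href of each response element, in document order.
    public static List<string> ExtractHrefs(string multistatusXml)
    {
        XmlDocument doc = new XmlDocument();
        doc.LoadXml(multistatusXml);

        // Bind a local prefix to the DAV: namespace for the XPath query.
        XmlNamespaceManager ns = new XmlNamespaceManager(doc.NameTable);
        ns.AddNamespace("d", "DAV:");

        List<string> hrefs = new List<string>();
        foreach (XmlNode node in doc.SelectNodes("//d:response/d:href", ns))
        {
            hrefs.Add(node.InnerText);
        }
        return hrefs;
    }
}
```

Each href can then be requested individually to download the message it points to. Depending on how the PROPFIND is issued, the folder itself may also appear in the list, so a real router would need to skip it.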

Conclusion

With this Exchange => SQL e-mail router it will now be possible to move forward in the conversion of Domino Applications to .Net. This gives me the ability to send an e-mail message to an Exchange mail-box and have it automatically converted and stored in SQL. Once these messages are in SQL, additional data can be added to them and custom business logic can act on them through .Net applications.

This work does, however, point out how much functionality is lost by moving away from Domino to Microsoft technologies. It also shows that replacing Domino applications requires multiple servers and development tools from Microsoft. All of this functionality is built into Domino. It takes 3 servers and days and days of coding to attempt to replace it. There are still many areas that need to be covered, such as replacing Domino’s secure web object store, but that can be left for another post.

Despair posters

One of my favourite web sites for purchasing all kinds of geek products is http://www.thinkgeek.com/.

This site also sells “Despair” posters which I find very amusing. I actually have this one right above my desk at work. My other 2 favourites are potential and Incompetence

Monday, October 09, 2006

Korean PC Game Super-Stars.

My 5 top useful korean phrases.

2 ) 여기에서 영어 할 줄 아는 사람 있어요 ? {R:} yo-gi-eh-so yong-o hal jul ah-nern saram iso-yo {E:} Does anyone around here speak English?

3 ) 안녕하세요{R:} An-nyong Ha-seh-yo {E:} Hello

4) 맥주 한 병 주세요 {R:} mak-ju han byong ju-seh-yo {E:} A beer please.

5 ) 화장실이 어디에요? {R:} hwa-jang-shiri oh-di-eh-yo? {E:} Where is the toilet.. ??

Friday, October 06, 2006

My First Oz-Korean Shirt Design

Here is the template for my first Oz-Korean t-shirt. The start of a new t-shirt empire !!!!! .. Well, probably not, but it will certainly identify the wearer as Australian to any Korean within 200 metres.

It says “I like riding kangaroos.” I will post some action shots of someone wearing one, when I actually get around to getting one made.

Acrobatics Extraordinare

This story needs a little background. I live in Townsville in North Queensland. The Townsville area is in fact two cities, Townsville and Thuringowa. Although I always say I am from “Townsville”, technically I live in the city of Thuringowa. I never say this because unless you live in Townsville you probably don’t know where Thuringowa is.

Anyway..

The Thuringowa city council has been moving ahead in leaps and bounds lately and has been spending (with help from the Queensland Government) a lot of money on Community Infrastructure. The latest component of this is Riverways.

“So how does this relate to Acrobats?” I hear you ask. Well, last night my family went for the first time to the new theatre inside the Riverways Centre. We saw Acrobatics Extraordinare, and it was amazingly good. My son is 2; he can usually sit through about 30 minutes of a movie (like Pixar’s “Cars”, for instance) before he gets bored and wants to do something else. Last night he sat for 1 and 1/2 hours in stunned silence as he watched this show. It was as if he was suffering from sensory overload. He just sat staring with his mouth open. And of course by the end of the night he wanted to be “an Acrobat when he grows up.”

Wednesday, October 04, 2006

Deck is underway

I am building a deck to overlook my pool area, as mentioned in one of my previous posts. It is currently "in-progress" so I thought I would post a few pics.

The bearers are 190x45 F7 treated timber. The joists are 70x70 F7 treated timber at a spacing of 500mm, and the decking is a treated hardwood which has been pre-grooved to give it a nice grip in the wet. The whole deck is approximately 5m x 4m, so a good size for a BBQ and a nice big table.

You can see all of the components in the stack as it came off the truck. I used metal footings that bolt onto the bearers with galvanised M12x150 bolts, and the footings are sunk into 900mm of concrete. (I cheated and used pre-mix rapid set.)

The image at the top is the progress so far. I am doing most of my work between 5:30am and 7:00am ( before work ). This is due to the fact that young children and power tools are not a good mix. And if my son is awake he always wants to help me out with whatever I am up to.

Programming History in a book stack

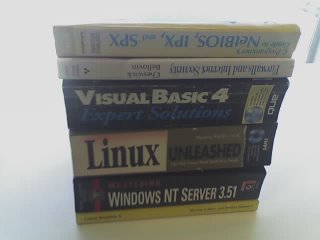

At lunch yesterday I decided to give my office a bit of a clean. I have had some old books gathering dust for some time; and it is probably time to give them away to a book exchange or see what amazingly low price I can achieve on e-bay. Such memorable titles as “C Programmer’s Guide to Netbios,IPX and SPX” ( I think I wrote an IRC over IPX with that one) , “Moving to Lotus Notes 5”, and the irony of the book entitled “Visual basic 4 - Expert Solutions”.

Aah… the memories

or maybe that should be

AAAAAAAAAAARRRRGGGGGGHHHHHHHH!!! … the MEMORIES.

당근이지 and korean on request sentences.

I think this would also be a good idea for a t-shirt, but possibly in english, to make it even more confusing.

Sometimes people I meet ask me to speak Korean, because they have never heard the language before. My favourite sentences to say are:

세관신고 할 것 없어요 {R:} sae-gwan-shin-go hal-ko opsoyo {E:} I have nothing to declare.

지하철 지도 있어요 ? {R:} Jee ha chul jido is-so-yo ? {E:} Do you have a subway map?

Both of the sentences are fairly useless unless of course you don’t have something to declare at customs or do need a subway map, but I think they sound interesting to a non-Korean-speaking person.

Markers for Translations.

한글 {R:} Romanised English {E:} English

So for example

사과{R:} sarkwa {E:} apple

Some beautiful fractals.

http://content.techrepublic.com.com/2346-10878_11-33277.html?tag=nl.e138

Monday, October 02, 2006

Javascript clock face time selection control

I was asked over the weekend about publishing some code for a time selection control (or time picker control) I developed some time ago when I was building a timesheeting application. Basically this is a couple of pages that allow a web developer to use a graphical clock as the user interface when someone needs to select a time. This could be the time of day or a length of time. This is much easier for the user than scrolling through a big pick-list of time choices, or trying to guess the format that a system wants for a given time field. The examples and downloadable source for this are available here.

There are 5 versions:

- A standard clock control, used to select a time from a clock face. The time format is HH:MM am/pm

- Small Timer HH:MM - Used for recording lengths of time taken to do something that is always less than 12 hours. Time format is HH:MM

- Small Timer HH.mm - Same as the Small Timer HH:MM except results are in HH.mm where mm is decimal fraction of hours not actual minutes

- Large Timer HH:MM - Used for recording large lengths of time, user selects minutes from a clock face but can enter the number of hours.

- Large Timer HH.mm – Same as Large Timer HH:MM except results are in HH.mm where mm is decimal fraction of hours not actual minutes
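The difference between the HH:MM and HH.mm formats is just a division by 60. The control itself is JavaScript; the sketch below is written in C# to match the other listings on this page, and the two-digit zero padding and the rounding to two decimal places are assumptions, not something the control is known to do.

```csharp
using System.Globalization;

// Hypothetical conversion between the two timer formats.
public static class TimerFormatSketch
{
    // Converts "HH:MM" (hours and minutes) to "HH.mm" (decimal hours).
    public static string ToDecimalHours(string hhmm)
    {
        string[] parts = hhmm.Split(':');
        int hours = int.Parse(parts[0], CultureInfo.InvariantCulture);
        int minutes = int.Parse(parts[1], CultureInfo.InvariantCulture);

        // Minutes become a fraction of an hour, e.g. 30 minutes is 0.5 hours.
        decimal value = hours + minutes / 60m;
        return value.ToString("00.00", CultureInfo.InvariantCulture);
    }
}
```

So one and a half hours is "01:30" in the HH:MM timers and "01.50" in the HH.mm timers.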